Portfolio-aware conversation

Richie can answer project and experience questions in a way that feels closer to an interactive briefing than a static personal site.

Case Study · Applied AI Persona

A personal AI persona built on retrieval, agentic codebase analysis, and structured memory so recruiters, collaborators, and curious users can explore my work through conversation instead of static documents.

Core AI flows: codebase memory generation and live retrieval-based answering.

Uses specialized agents for summarization, context collapsing, classification, and routing.

Stores structured project memory for semantic lookup across repos and personal data.

Answers questions about projects, experience, and technical background in context.

Tech Stack

Python

Pinecone

LangChain

Problem

Resumes and portfolios are static. They compress experience into short bullet points, but they do not let someone ask follow-up questions, compare projects, or understand how pieces of work relate to each other.

Richie turns that static material into an interactive layer. Instead of manually reading repositories, resume lines, and portfolio pages, a user can ask direct questions and get answers grounded in structured memory.

Memory System

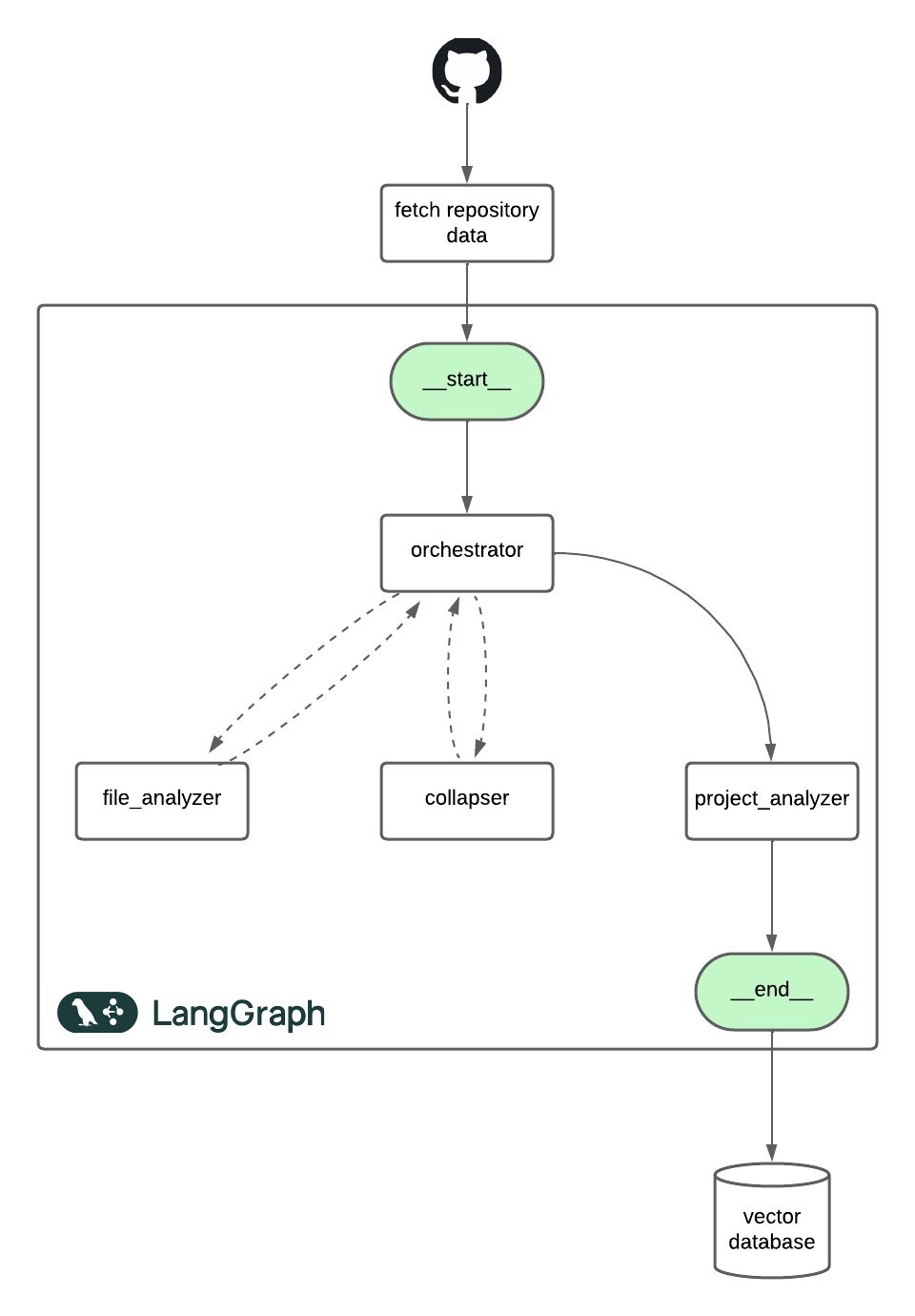

Richie’s memory layer starts with deep codebase analysis across repositories and related artifacts. The goal is not just to index files, but to build a usable representation of project intent, structure, responsibilities, and technical decisions.

The pipeline is intentionally modular. Individual files are summarized first, then larger contexts are collapsed when token limits become an issue, and finally project-level summaries are produced for storage. This keeps the system cost-aware and makes large repositories tractable.
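The staged flow above can be sketched roughly as follows. This is a minimal illustration, not Richie's actual code: `count_tokens`, `pack`, and `summarize_project` are hypothetical names, the word-count tokenizer is a crude stand-in for a real one, and `summarize` is assumed to be any callable that wraps a model call.

```python
from typing import Callable, Dict, List


def count_tokens(text: str) -> int:
    # Assumption: one token per whitespace-separated word (a real tokenizer differs).
    return len(text.split())


def pack(items: List[str], max_tokens: int) -> List[List[str]]:
    # Greedily group items so each batch stays within the token budget.
    # A single item larger than the budget ends up in a batch of its own.
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for item in items:
        size = count_tokens(item)
        if current and used + size > max_tokens:
            batches.append(current)
            current, used = [], 0
        current.append(item)
        used += size
    if current:
        batches.append(current)
    return batches


def summarize_project(
    files: Dict[str, str],
    summarize: Callable[[str], str],
    max_tokens: int,
) -> str:
    # Stage 1: summarize every file on its own.
    summaries = [f"{path}: {summarize(src)}" for path, src in sorted(files.items())]

    # Stage 2: collapse batches of summaries while the combined text is over budget.
    while len(summaries) > 1 and count_tokens("\n".join(summaries)) > max_tokens:
        collapsed = [summarize("\n".join(batch)) for batch in pack(summaries, max_tokens)]
        if len(collapsed) >= len(summaries):
            break  # batching made no progress; stop rather than loop forever
        summaries = collapsed

    # Stage 3: produce one project-level summary for storage.
    return summarize("\n".join(summaries))
```

The collapse stage only runs when the combined summaries exceed the budget, so small repositories pay for the per-file pass and one final call, nothing more.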

The resulting summaries are embedded into a vector database alongside resume text, portfolio material, and other professional context. That becomes Richie’s long-term memory.
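As a rough picture of that storage step, here is a self-contained sketch that uses an in-memory store in place of Pinecone and a hashed bag-of-words vector in place of a real embedding model. Everything in it (`embed`, `MemoryStore`, the `source` metadata field, the 64-dimension vector) is an assumption made for illustration.

```python
import hashlib
import math
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

DIM = 64  # Assumption: toy dimensionality; real embedding models use far more.


def embed(text: str) -> List[float]:
    # Stand-in embedding: hash each word into a bucket, then L2-normalize.
    vec = [0.0] * DIM
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


@dataclass
class MemoryStore:
    # record id -> (vector, metadata); a stand-in for a hosted vector index.
    records: Dict[str, Tuple[List[float], Dict[str, str]]] = field(default_factory=dict)

    def upsert(self, record_id: str, text: str, source: str) -> None:
        # Store the vector together with the text and where it came from.
        self.records[record_id] = (embed(text), {"source": source, "text": text})

    def query(self, question: str, top_k: int = 3) -> List[Dict[str, str]]:
        # Vectors are unit length, so the dot product equals cosine similarity.
        q = embed(question)
        scored = [
            (sum(a * b for a, b in zip(q, vec)), meta)
            for vec, meta in self.records.values()
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [meta for _, meta in scored[:top_k]]
```

Keeping a `source` tag on each record is one way to let repo summaries, resume text, and portfolio material share a single index while staying distinguishable at query time.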

Answering Flow

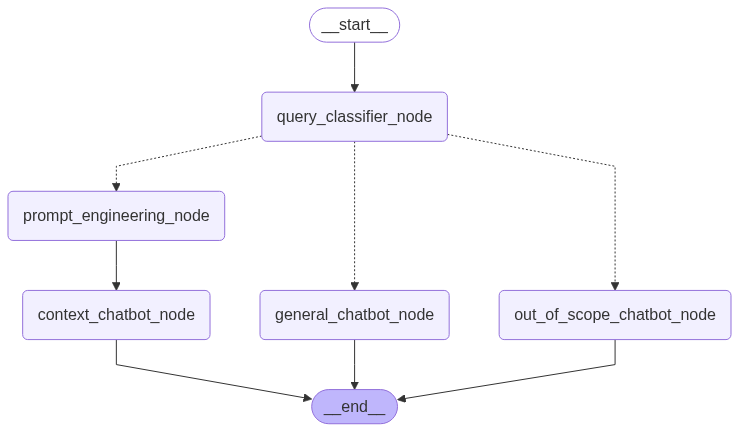

Once the memory layer is built, Richie uses retrieval-augmented generation to answer questions in real time. The system is not a single prompt over a vector store; it uses routing logic so different kinds of questions take different paths.

This structure improves relevance, avoids unnecessary token spend, and keeps the experience more stable than a single undifferentiated prompt chain.
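A minimal sketch of what such routing could look like, assuming just two routes (casual and portfolio). The keyword-based `classify` and the string-cleaning `rewrite` are placeholders for model-backed steps, and `retrieve` and `generate` are hypothetical callables supplied by the caller.

```python
from typing import Callable, List

# Assumption: a tiny fixed set of greetings stands in for a real classifier.
SMALL_TALK = {"hi", "hello", "hey", "thanks", "thank you"}


def classify(question: str) -> str:
    # Route greetings and pleasantries away from the retrieval path.
    normalized = question.lower().strip(" !?.")
    return "casual" if normalized in SMALL_TALK else "portfolio"


def rewrite(question: str) -> str:
    # Placeholder for a model-based rewrite into a search-friendly query.
    return question.strip().rstrip("?").lower()


def answer(
    question: str,
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str, List[str]], str],
) -> str:
    if classify(question) == "casual":
        # Casual path: no rewrite and no vector lookup, so no retrieval cost.
        return generate(question, [])
    # Portfolio path: rewrite, retrieve context, then answer from that context.
    context = retrieve(rewrite(question))
    return generate(question, context)
```

Because retrieval and generation are passed in as functions, each route can be exercised with fakes before any model or vector store is attached.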

A staged summarization pipeline lets Richie build usable memory from raw repositories without exceeding model limits.

Richie classifies, rewrites, retrieves, and answers so the final output is grounded in relevant context rather than generic model completion.

Product View

The system does not depend on one giant context dump. It builds reusable memory from modular summaries and retrieval.

The pipeline uses staged analysis and routing so large repositories and casual queries do not burn unnecessary model budget.

Because memory is built from analyzed repos, Richie can explain goals, architecture, and implementation patterns instead of only repeating keywords.

Richie lowers the cost of understanding technical work, which is the main reason a personal AI persona is worth building.

Richie also acts as a practical playground for agent design, retrieval systems, and memory architecture patterns.

Richie is both a usable AI interface and a case study in personal memory systems, retrieval, and agent orchestration.